Web API Pagination with the 'Timestamp_Offset_Checksum' Continuation Token

Posted on Dec 13, 2017. Updated on Jan 20, 2019

Implementing proper pagination for Web APIs is not trivial. Offering limit and offset query parameters doesn’t cut it because this approach is slow and error-prone. But also keyset paginations with parameters like modified_since have drawbacks. Fortunately, there is a better approach: Continuation Tokens (aka Cursors). They provide fast, reliable and stateless pagination and make the client implementation very straight-forward. In this post, I propose the Timestamp_Offset_Checksum continuation token, the algorithm and point to a library simplifying its implementation.

TL;DR

- Offset pagination is slow and error-prone.

- Simple keyset pagination is fast, but also have some drawbacks especially when many elements have the same timestamp.

- A “Continuation Token” solves this issue.

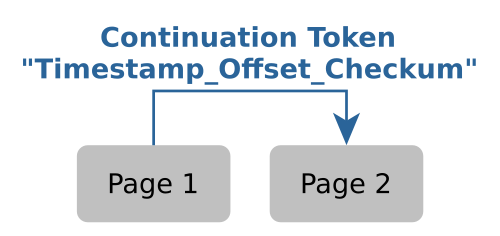

- It points to the last element of the delivered page

- It’s generated by the server and passed to the client. The client sends the token back to the server to get the next page. With the token, the server knows where to he has to continue.

- Format:

Timestamp_Offset_Checksum - It provides fast, reliable and stateless pagination

- The implementation is tricky as there are many corner cases. Proper testing is required.

- We at Spreadshirt wrote the small JVM library continuation-token helping to implement this approach.

- Update 2018: I don’t recommend the

Timestamp_Offset_Checksumapproach any longer. Instead, use theTimestamp_IDapproach.

Other Pagination Approaches

For better understanding, let’s recap two common approaches for Web API pagination.

Offset Pagination

A common way is offset pagination. The client uses the parameters limit and offset to page through the elements.

GET /elements?limit=100 => the client receives the first 100 elements.

GET /elements?limit=100&offset=100 => the client receives the next 100 element.

GET /elements?limit=100&offset=200 => the client receives only 50 elements. So that's the end.

Some APIs use the parameters pageNumber and pageSize instead of limit and offset. But it’s basically the approach.

The drawbacks:

- Slow. An issue here is the

OFFSETclause that is used in the server’s database query. It’s incredibly slow when it comes to tables with millions of entries. There is no index involved that may speed up our queries. - Unsafe. It’s really easy to miss elements if the element order changes during two requests. If an element of a previous request is deleted, one element of the following page moves up to a page that has already been delivered.

Side note: Often, the server sends the total amount of all elements in the response (total or count). This may appear handy. But in practice, this has performance impacts as an additional expensive COUNT has to be executed against the database. But most important, most clients don’t need this information anyway. They are only interested in the next page (and maybe the previous one). Random page access is rarely required (e.g. “give me the 24. of 230 pages”). In this case, the client would need to know the total amount of elements to calculate the number of possible pages.

Keyset Pagination

A better approach is leveraging an indexed column (usually a timestamp column like date_created or date_modified). The client uses the timestamp of the last element in the last page as the parameter for the next request.

GET /elements?pageSize=100

=> the client receives the oldest 100 elements. The last element of the page has the `date_modified` field with 1504224000 (= Sep 1, 2017 12:00:00 AM)

GET /elements?pageSize=100&modifiedSince=1504224000

=> the client receives the 100 elements since 1504224000. The last element of the page was modified on 1506816000. And so on.

This approach is easy to implement, fast and should be good enough for many use cases. But there are still drawbacks:

- Endless loops. We can end up in endless loops if all elements of a page have the same timestamp. In practice, this can easily happen after a bulk update of many elements. The only thing we can do here is to make endless loops less likely by:

- Always use a high page size.

- Use timestamps with millisecond precision (and we can’t take that for granted. For instance, before MySQL 5.6.4 there were only

timestampcolumns with second precision).

- Not efficient. The client may receive and process the same elements multiple times when many elements have the same timestamp and they are overlapping two pages.

Fortunately, we can improve the keyset pagination by adding more information to the key/timestamp. One example is the Timestamp_Offset_Checksum token.

Update 2018

In the following, I’ll present the Timestamp_Offset_Checksum token approach. The algorithm works pretty fine in most of the cases. However, it becomes extremely slow, when a huge amount of elements (> 15 000) have the same timestamp. That’s why we migrated to the Timestamp_ID token for pagination. As of today, we are very happy with it and I strongly recommend to adopt the Timestamp_ID approach instead of the Timestamp_Offset_Checksum token. However, I’ll keep the following description online for the sake of documentation and inspiration. Check out the drawbacks section for a detailed description of our reasons.

Structure of the Timestamp_Offset_Checksum Continuation Token

- The token is sent to the client in the payload (

continuationTokenfield in json). The client passes it as a query parameter for the next page (continuationTokenquery parameter). - The token points to the last element of the delivered page.

- Format:

Timestamp_Offset_Checksum- The

Timestampof the last element of the page. It’s used to know where the next page should start. Normal keyset pagination so far. - The

Offsetpoints to the position of the element relative to the first item with the same timestamp. For instance, the offset is1if there is only one element with a timestamp x. It’s2if there is an element before the last one with the same timestamp. - The

Checksum(IDs)is calculated over all IDs with the same timestamp from position 0 up to the position of the last element. It’s used to detect changes during pagination and to trigger a fallback in those cases.

- The

The Pagination Algorithm

Let’s start with a simple case: Assume that we have 6 elements all having different timestamps (1..6). Besides, assume a page size of 3.

All elements have different timestamps.

This is the happy path. The first page is returned containing 3 elements and the continuation token 3_1. The token points to the last element of the page. So read 3_1 as “the last element of the page is the first element with the timestamp 3”. The client sends the received token 3_1 back to the server in order to get the next page. By looking at the token, the server knows that the client already saw one element with the timestamp 3. So it queries the database for all elements with timestamp >= 3 and skips the first element. The first element of page 2 starts with timestamp 4. And so on.

Now it gets interesting. Assume that we have several elements with the same timestamp 2 and they are overlapping two pages:

Multiple elements with the same timestamp 2 are overlapping two pages. The offset is important to distinguish between the elements with the same timestamps.

This is where the offset enters the stage. The server returns the continuation token 2_2 because the last element of the first page is the second element with the timestamp 2. When asked for the next page, the server queries for timestamp >= 2 and skips two elements. This way, the first and seconds element with timestamp 2 are not delivered again to the client. Page 2 starts with the third element with that timestamp.

Still easy, right? But what if all elements of one or more pages have the same timestamp 1? This would lead to endless loops with primitive keyset pagination. Fortunatelupday, the offset helps here again.

Even if all elements have the same timestamp, we don’t run into endless loops.

I guess the pattern is clear. Same timestamps are no problem at all because the offset carries the position of the element within the list of elements with the same timestamp. The token 1_3 points to the third element with the timestamp 1. And 1_6 points to the sixth element. And so on.

Now it gets really interesting! What if an element changes between two pages? Let’s say its timestamp got updated and so it moves right to the bottom. Skipping can now be dangerous because we may miss elements. This is where the third part of the continuation token comes into play: the checksum.

The checksum is used to detect changes in the element order between two page requests. In those cases, a fallback is performed.

From now on, we have to also consider the IDs of the elements and not only the timestamps. The first page contains the elements with the IDs 1, 2, 3 having the timestamps 1, 2, 2. A CRC32-checksum is calculated with all IDs of the elements with the timestamp of the last item. So checksum(2, 3) is performed, because both elements 2 and 3 have the same timestamp 2. Page 1 got delivered to the client. So far so good. But let’s assume that now element 3 got updated before the client requests page 2. It gets a current timestamp and moves down to the very end. If the server would now simply skip two elements, we would miss element 4, because it moves up to the place where element 3 has been. Fortunately, the server calculates the checksum with checksum(2, 4) which results in a different checksum than the value he received from the client (which was checksum(2, 3)). Now the server knows that a change in the order happened during the two requests. In this cases a fallback is performed: The server delivers all elements with the timestamp 2. This way, element 4 is not missed. But it also means that the client may see elements twice (like element 2). He has to get along with it.

Conditions

- There has to be an index on both the ID and the timestamp column.

- The IDs have to be unique.

- We have to sort after both the timestamp and the ID in every query. This way, we get a constant order even in case of equal timestamps.

- It can happen that we deliver an element multiple times during one pagination run (in case of timestamp changes and moving elements). The client has to get along with this; he has to be idempotent.

Optimization

Timestamps with second precision are fine. But I highly recommend using at least millisecond precision. First, this reduces the number of skips (the offset is more likely to be 1). So the server queries fewer entries from the database in vain (those that got skipped by the algorithm). And second, we’ll have fewer fallbacks due to unequal checksums. So fewer elements are delivered twice to the client.

Features

Advantages

- Fast. We use only indexed columns and avoid the slow

offsetclause. Besides, we don’t execute an additional and expensivecountquery. - Reliable.

- No elements are missed even if elements are changed during a pagination run.

- We can’t run into endless loops; even with small page sizes and timestamp columns with second precision.

- Good Efficiency. Due to the skipping (the

offset), we reduce the number of elements that are delivered more than one time. It’s way better than using only a timestamp (simple keyset-pagination). However, in case of fallbacks, elements can be delivered multiple times. - Stateless. No state on the server-side is required. So every server instance can handle the requests. This eases horizontal scaling.

- Easy client implementation and evolvability. The client doesn’t have to fiddle around with limit and offset calculations. He just passes the received token back to the server - as a black box. He doesn’t have to analyze or understand the token. This, in turn, makes later changes in the token structure easy.

- No Expiration. The token is always valid. We can stop a pagination run at any time or position and resume later. If our client or the service crashes during a pagination run, we can easily resume at the point we stopped. We won’t miss any element. We only have to persist the current token after each request for a page.

Drawbacks

- The correct implementation is non-trivial. There are many corner cases that have to be taken into account. Proper unit testing is absolutely required.

- Performance issues when a huge amount (let’s say > 15 000 elements) have the same timestamp. In this case, the

offsetpart of the token becomes pretty huge and so thelimitclause of the SQL query does because it’s calculated with the offset (offset+pageSizein order to calculate the checksum). Unfortunately,limitdoesn’t scale well. The query execution and data transfer will take longer and longer because we have to transfer more and more data to the algorithm in the application layer. Finally, we’ll end up with responses getting slower and slower and finally timing out. Please mind that having this amount of same timestamps is not unrealistic if you run bulk updates. Bottom line: If you are facing more than, let’s say, 15 000 elements with the same timestamp you should check out the TI token approach instead. Besides, it’s much easier to implement. - In case of a fallback due to a checksum inequality, we may deliver multiple elements multiple times to the client (depending on the number of elements with the same timestamp). So not only the element that was updated is delivered twice.

Implementation

We at Spreadshirt created the small Java/Kotlin library continuation-token which helps to implement the above algorithm. We put a lot of effort into proper testing in order to find all bugs and corner cases.

Contribution and Links

- The post “Smart Feeds” by Justin Karneges from Fanout proposes the described approach (he calls the token “cursor”). We adopted his approach and created an open source implementation for it. Thank you so much for the inspiration!

- The post “Nearly all web APIs get paging wrong” by Joannes Vermorel points to the pitfalls of Web API pagination. We’ve borrowed the term “Continuation Token” from him. Thanks!